According to Futurism, the Alaska Court System is preparing to publicly launch its AI chatbot, the Alaska Virtual Assistant (AVA), in late January after more than a year in development—a project originally slated to take just three months. The bot, built in collaboration with lawyer Tom Martin’s company LawDroid, is designed to help people navigate probate court forms. During testing, it consistently hallucinated, providing false information like directing users to a non-existent law school in Alaska. User feedback found the bot’s feigned empathy and condolences to be grating and unhelpful. Project leaders Aubrie Souza of the National Center for State Courts and Stacey Marz of the Alaska Court System admit they’ve had to significantly shift their goals for the tool, abandoning hopes it could replicate human court facilitators due to persistent inaccuracies.

The hallucination problem is the product

Here’s the thing: this story isn’t an outlier. It’s basically the blueprint for every rushed, under-baked government or institutional AI project happening right now. The core failure is fundamental. The developers built a system that was “not supposed to actually use anything outside of its knowledge base,” but the LLM-powered chatbot did exactly that, making up facts with cheerful confidence. That’s not a bug you can just patch out; it’s the inherent nature of the technology they chose. So you’re left with a “virtual assistant” for a sensitive, legally consequential process that cannot be trusted to tell the truth. What’s the point? It seems like the entire value proposition collapses right there.

Lowered expectations as a launch strategy

Now, the most telling part is the official response. They didn’t solve the hallucination problem. They just lowered their expectations. Stacey Marz’s quote is a masterpiece of bureaucratic disillusionment: “We wanted to replicate what our human facilitators… are able to share with people. But we’re not confident that the bots can work in that fashion.” After all the hype about AI revolutionizing and democratizing access to legal help, the conclusion is that the tech can’t do the core job they envisioned. So what *is* it launching to do? The article is vague, but it sounds like a severely limited information fetcher that they hope will fail less spectacularly. That’s a pretty grim milestone for a “public launch.”

The real cost of AI hype

And let’s talk about the human cost, which is too easily glossed over. This bot was meant for people dealing with probate, people who are likely grieving and overwhelmed. The last thing they need is a chirpy, inaccurate robot wasting their time or sending them on wild goose chases. The developers heard from users who were “tired of everybody in my life telling me that they’re sorry for my loss,” so they removed the AI’s condolences. But that just treats a symptom. The deeper illness is deploying a fundamentally unreliable tool into a high-stakes, emotionally charged environment because there’s “buzz” and pressure to do something with AI. It was “so very labor-intensive,” Marz says. I bet it was. And for what? A product that does less, and does it worse, than the human process it was meant to augment.

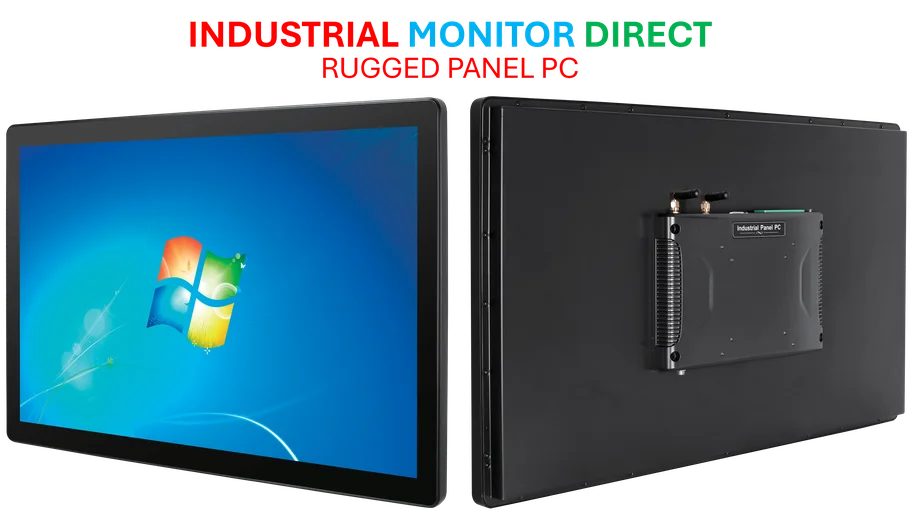

A cautionary tale for industrial AI

This saga is a critical warning, especially for sectors where accuracy and reliability aren’t just nice-to-haves; they’re everything. In fields like manufacturing, logistics, or industrial computing, a hallucination isn’t an annoyance; it’s a recipe for costly errors, downtime, or safety issues. Deploying generative AI in a legal setting where it invents a law school is bad. Deploying it on a factory floor or in a control system where it invents a safety procedure or a parts specification is catastrophic. For mission-critical applications, the hardware and software need to be deterministic and trustworthy, and that has to take priority over unproven, buzzword-driven tech. The Alaska court bot shows what happens when you get that priority backwards. You end up with a broken product and a lot of wasted time, hoping the public won’t notice how bad it still is.